With ARPANET, Robert Taylor and Larry Roberts intended to connect many different research institutions, each hosting its own computer, for whose hardware and software it was wholly responsible. The hardware and software of the network itself, however, lay in a nebulous middle realm, belonging to no particular site. Over the course of the years 1967-1968, Roberts, head of the networking project for ARPA’s Information Processing Techniques Office (IPTO), had to determine who should build and operate the network, and where the boundary of responsibility should lie between the network and the host institutions.

The Skeptics

The problem of how to structure the network was at least as much political as technical. The principal investigators at the ARPA research sites did not, as a body, relish the idea of ARPANET. Some evinced a perfect disinterest in ever joining the network; few were enthusiastic. Each site would have to put in a large amount of effort in order to let others share its very expensive, very rare computer. Such sharing had manifest disadvantages (loss of a precious resource), while its potential advantages remained uncertain and obscure.

The same skepticism about resource sharing had torpedoed the UCLA networking project several years earlier. However, in this case, ARPA had substantially more leverage, since it had directly paid for all those precious computing resources, and continued to hold the purse strings of the associated research programs. Though no direct threats were ever made, no “or else,” issued, the situation was clear enough – one way or another ARPA would build its network, to connect what were, in practice, still its machines.

Matters came to a head at a meeting of the principal investigators in Ann Arbor, Michigan, in the spring of 1967. Roberts laid out his plan for a network to connect the various host computers at each site. Each of the investigators, he said, would fit their local host with custom networking software, which it would use to dial up other hosts over the telephone network (this was before Roberts had learned about packet-switching). Dissent and angst ensued. Among the least receptive were the major sites that already had large IPTO-funded projects, MIT chief among them. Flush with funding for the Project MAC time-sharing system and artificial intelligence lab, MIT’s researchers saw little advantage to sharing their hard-earned resources with rinky-dinky bit players out west.

Regardless of their stature, moreover, every site had certain other reservations in common. They each also had their own unique hardware and software, and it was difficult to see how they could even establish a simple connection with one another, much less engage in real collaboration. Just writing and running the networking software for their local machine would also eat up a significant amount of time and computer power.

It was ironic yet surprisingly fitting that the solution adopted by Roberts to these social and technical problems came from Wes Clark, a man who regarded both time-sharing and networking with distaste. Clark, the quixotic champion of personal computers for each individual, had no interest in sharing computer resources with anyone, and kept his own campus, Washington University in St. Louis, well away from ARPANET for years to come. So it is perhaps not surprising that he came up with a network design that would not add any significant new drain on each site’s computing resources, nor require those sites to spend a lot of effort on custom software.

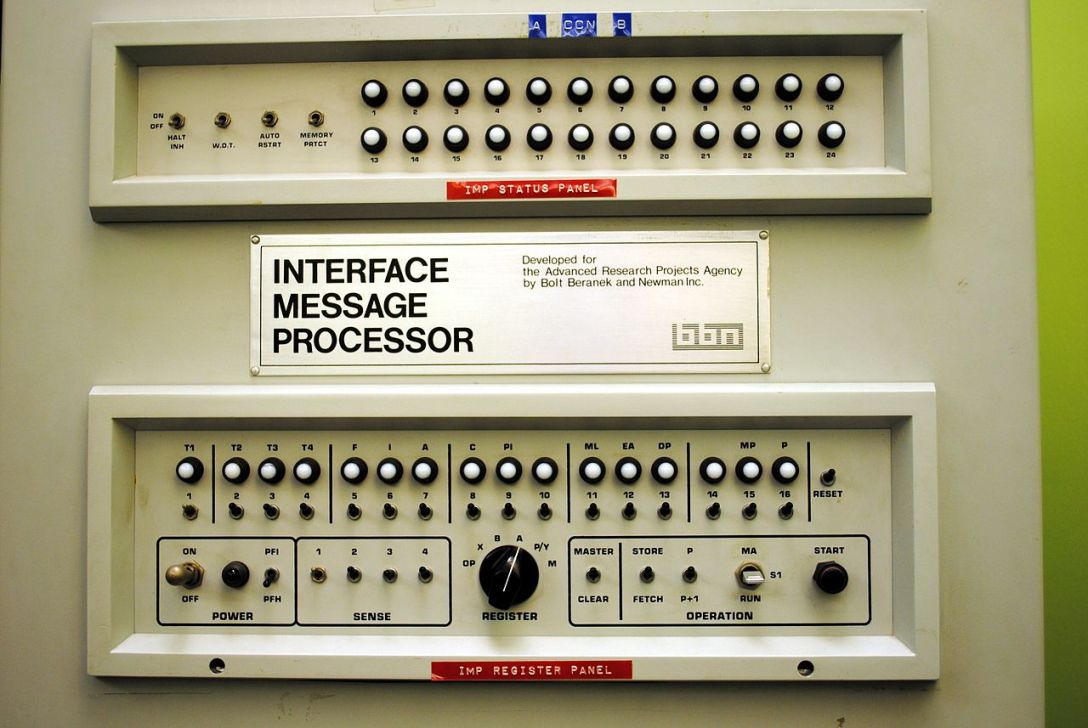

Clark proposed setting up a mini-computer at each site which would handle all the actual networking functions. Each host would have to understand only how to connect to its local helpmate (later dubbed an Interface Message Processor, or IMP), which would then route the message onward so that it reached the corresponding IMP at the destination. In effect, he proposed that ARPA give an additional free computer to each site, which would absorb most of the resource costs of the network. At a time when computers were still scarce and very dear, the proposal was an audacious one. Yet with the recent advent of mini-computers that cost just tens of thousands of dollars rather than hundreds of thousands, it fell just this side of feasible.1

While alleviating some of the concerns of the principal investigators about a network tax on their computer power, the IMP approach also happened to solve another political problem for ARPA. Unlike any other ARPA project to date, the network was not confined to a single research institution where it could be overseen by a single investigator. Nor was ARPA itself equipped to directly build and manage a large-scale technical project. It would have to hire a third party to do the job. The presence of the IMPs would provide a clear delineation of responsibility between the externally-managed network and the locally-managed host computer. The contractor would control the IMPs and everything between them, while the host sites would each remain fully (and solely) responsible for the hardware and software on their own computer.

The IMP

Next, Roberts had to choose that contractor. The old-fashioned Licklider approach of soliciting a proposal directly from a favored researcher wouldn’t do in this case. The project would have to be put up for public bid like any other government contract.

It took until July of 1968 for Roberts to prepare the final details of the request for bids. About a half year had elapsed since the final major technical piece of the puzzle fell into place, with the revelation of packet-switching at the Gatlinburg conference. Two of the largest computer manufacturers, Control Data Corporation (CDC) and International Business Machines (IBM), immediately bowed out, since they had no suitable low-cost minicomputer to serve as the IMP.

Among the major remaining contenders, most chose Honeywell’s new DDP-516 computer, though some plumped instead for the Digital PDP-8. The Honeywell was especially attractive because it featured an input/output interface explicitly designed to interact with real-time systems, for applications like controlling industrial machinery. Communications, of course, required similar real-time precision: if an incoming message were missed because the computer was busy doing other work, there was no second chance to capture it.

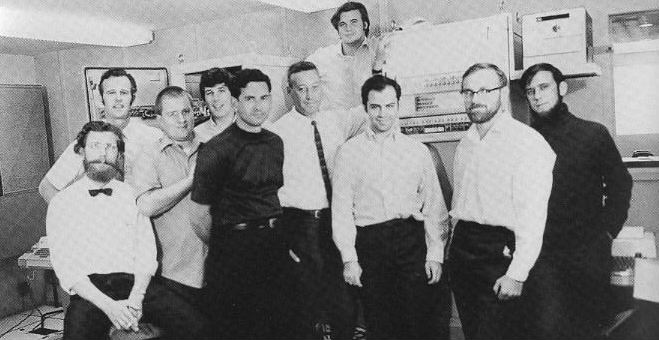

By the end of the year, after strongly considering Raytheon, Roberts offered the job to the growing Cambridge firm of Bolt, Beranek and Newman. The family tree of interactive computing was, at this date, still extraordinarily ingrown, and in choosing BBN Roberts might reasonably have been accused of a kind of nepotism. J.C.R. Licklider had brought interactive computing to BBN before leaving to serve as the first director of IPTO, seed his intergalactic network, and mentor men like Roberts. Without Lick’s influence, ARPA and BBN would have been neither interested in nor capable of handling the ARPANET project. Moreover, the core of the team assembled by BBN to build the IMP came directly or indirectly from Lincoln Lab: Frank Heart (the team’s leader), Dave Walden, Will Crowther, and Severo Ornstein. Lincoln, of course, is where Roberts himself did his graduate work, and where a chance collision with Wes Clark had first sparked Lick’s excitement about interactive computing.

But cozy as the arrangement may have seemed, in truth the BBN team was as finely tuned for real-time performance as the Honeywell 516. At Lincoln, they worked on computers that interfaced with radar systems, another application where data would not wait for the computer to be ready. Heart, for example, had worked on the Whirlwind computer as a student as far back as 1950, joined the SAGE project, and spent a total of fifteen years at Lincoln Lab. Ornstein had worked on the SAGE cross-telling protocol, for handing off radar track records from one computer to another, and later on Wes Clark’s LINC, a computer designed to support scientists directly in the laboratory, with live data. Crowther, now best known as the author of Colossal Cave Adventure, spent ten years building real-time systems at Lincoln, including the Lincoln Experimental Terminal, a mobile satellite communications station with a small computer to point the antenna and process the incoming signals.2

The IMPs were responsible for understanding and managing the routing and delivery of messages from host to host. The hosts could deliver up to 8000 bytes at a time to their local IMP, along with a destination address. The IMP then sliced this into smaller packets which were routed independently to the destination IMP, across 50 kilobit-per-second lines leased from AT&T. The receiving IMP reassembled the pieces and delivered the complete message to its host. Each IMP kept a table that tracked which of its neighbors offered the fastest route to reach each possible destination. This was updated dynamically based on information received from those neighbors, including whether they appeared to be unavailable (in which case the delay in that direction was effectively infinite). To meet the speed and throughput requirements specified by Roberts for all of this processing, Heart’s team crafted little poems in code. The entire operating program for the IMP required only about 12,000 bytes; the portion that maintained the routing tables only 300.3
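The two jobs described above, slicing a message into independently routed packets and choosing the neighbor with the lowest reported delay, can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a reconstruction of BBN's code: the `Packet` fields, the packet size, the `RoutingTable` class and its method names, and the delay figures in the usage note are all invented for the example.

```python
from dataclasses import dataclass

INFINITY = float("inf")  # delay assigned to a neighbor that appears unavailable


@dataclass(frozen=True)
class Packet:
    # All field names are hypothetical; the real IMP packet format differed.
    message_id: int
    index: int        # position of this piece within the message
    total: int        # number of pieces the message was sliced into
    destination: str
    payload: bytes


def split_message(message_id, destination, data, packet_size=125):
    """Slice one host message into smaller, independently routable packets."""
    chunks = [data[i:i + packet_size] for i in range(0, len(data), packet_size)]
    return [Packet(message_id, i, len(chunks), destination, chunk)
            for i, chunk in enumerate(chunks)]


def reassemble(packets):
    """Rebuild the message at the receiving IMP, whatever the arrival order."""
    ordered = sorted(packets, key=lambda p: p.index)
    if not ordered or [p.index for p in ordered] != list(range(ordered[0].total)):
        raise ValueError("message is incomplete")
    return b"".join(p.payload for p in ordered)


class RoutingTable:
    """Tracks, per destination, which neighbor currently offers the fastest route."""

    def __init__(self, link_delays):
        # neighbor -> delay of the direct line to that neighbor
        self.link_delays = dict(link_delays)
        # neighbor -> that neighbor's latest reported delay to each destination
        self.reports = {neighbor: {} for neighbor in link_delays}

    def receive_report(self, neighbor, delays):
        """Store the delay estimates a neighbor has just sent us."""
        self.reports[neighbor] = dict(delays)

    def mark_unavailable(self, neighbor):
        """A silent neighbor is treated as infinitely slow."""
        self.link_delays[neighbor] = INFINITY

    def best_neighbor(self, destination):
        """Return the neighbor with the lowest total delay, or None if unreachable."""
        best, best_delay = None, INFINITY
        for neighbor, link_delay in self.link_delays.items():
            delay = link_delay + self.reports[neighbor].get(destination, INFINITY)
            if delay < best_delay:
                best, best_delay = neighbor, delay
        return best
```

With made-up delays, an IMP whose neighbors are SRI and UCSB would call `table.receive_report("SRI", {"UTAH": 1.0})` and `table.receive_report("UCSB", {"UTAH": 3.0})`, after which `table.best_neighbor("UTAH")` picks SRI. Once `table.mark_unavailable("SRI")` is called, the same query falls back to UCSB.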

The team also took several precautions to address the fact that it would be infeasible to have maintenance staff on site with every IMP.

First, they equipped each computer with remote monitoring and control facilities. In addition to an automatic restart function that would kick in after power failure, the IMPs were programmed to be able to restart their neighbors by sending them a fresh instance of their operating software. To help with debugging and analysis, an IMP could be instructed to start taking snapshots of its state at regular intervals. The IMPs would also honor a special ‘trace’ bit on each packet, which triggered additional, more detailed logs. With these capabilities, many kinds of problems could be addressed from the BBN office, which acted as a central command center from which the status of the whole network could be overseen.

Second, they requisitioned from Honeywell the military-grade version of the 516 computer, equipped with a thick casing to protect it from vibration and other environmental hazards. BBN intended this primarily as a “keep out” sign for curious graduate students, but nothing delineated the boundary between the hosts and the BBN-operated subnet as visibly as this armored shell.

The first of these hardened cabinets, about the size of a refrigerator, arrived on site at the University of California, Los Angeles (UCLA) on August 30, 1969, just 8 months after BBN received the contract.

The Hosts

Roberts decided to start the network with four hosts – in addition to UCLA, there would be an IMP just up the coast at the University of California, Santa Barbara (UCSB), another at Stanford Research Institute (SRI) in northern California, and the last at the University of Utah. All were scrappy West Coast institutions looking to establish themselves in academic computing. The close family ties also continued, as two of the involved principal investigators, Len Kleinrock at UCLA and Ivan Sutherland at the University of Utah, were also Roberts’ old office mates from Lincoln Lab.

Roberts also assigned two of the sites special functions within the network. Doug Engelbart of SRI had volunteered as far back as the 1967 principals meeting to set up a Network Information Center. Leveraging SRI’s sophisticated on-line information retrieval system, he would compile the telephone directory, so to speak, for ARPANET: collating information about all the resources available at the various host sites and making it available to everyone on the network. On the basis of Kleinrock’s expertise in analyzing network traffic, meanwhile, Roberts designated UCLA as the Network Measurement Center (NMC). For Kleinrock and UCLA, ARPANET was to serve not only as a practical tool but also as an observational experiment, from which data could be extracted and generalized to learn lessons that could be applied to improve the design of the network and its successors.

But more important to the development of ARPANET than either of these formal institutional designations was a more informal and diffuse community of graduate students called the Network Working Group (NWG). The sub-net of IMPs allowed any host on the network to reliably deliver a message to any other; the task taken on by the Network Working Group was to devise a common language or set of languages that those hosts could use to communicate. They called these the “host protocols.” The word protocol, a borrowing from diplomatic language, was first applied to networks by Roberts and Tom Marill in 1965, to describe both the data format and the algorithmic steps that determine how two computers communicate with one another.

The NWG, under the loose, de facto leadership of Steve Crocker of UCLA, began meeting regularly in the spring of 1969, about six months in advance of the delivery of the first IMP. Crocker was born and raised in the Los Angeles area, and attended Van Nuys High School, where he was a contemporary of two of his later NWG collaborators, Vint Cerf and Jon Postel4. In order to record the outcome of some of the group’s early discussions, Crocker developed one of the keystones of the ARPANET (and future Internet) culture, the “Request for comments” (RFC). His RFC 1, published April 7, 1969 and distributed to the future ARPANET sites by postal mail, synthesized the NWG’s early discussions about how to design the host protocol software. In RFC 3, Crocker went on to define the (very loose) process for all future RFCs:

Notes are encouraged to be timely rather than polished. Philosophical positions without examples or other specifics, specific suggestions or implementation techniques without introductory or background explication, and explicit questions without any attempted answers are all acceptable. The minimum length for a NWG note is one sentence. …we hope to promote the exchange and discussion of considerably less than authoritative ideas.

Like a “Request for quotation” (RFQ), the standard way of requesting bids for a government contract, an RFC invited responses, but unlike the RFQ, the RFC also invited dialogue. Within the distributed NWG community anyone could submit an RFC, and they could use the opportunity to elaborate on, question, or criticize a previous entry. Of course, as in any community, some opinions counted more than others, and in the early days the opinion of Crocker and his core group of collaborators counted for a great deal. In fact by July 1971, Crocker had left UCLA (while still a graduate student) to take up a position as a Program Manager at IPTO. With crucial ARPA research grants in his hands, he wielded undoubted influence, intentionally or not.

The NWG’s initial plan called for two protocols. Remote login (or Telnet) would allow one computer to act like a terminal attached to the operating system of another, extending the interactive reach of any ARPANET time-sharing system across thousands of miles to any user on the network. The file transfer protocol (FTP) would allow one computer to transfer a file, such as a useful program or data set, to or from the storage system of another. At Roberts’ urging, however, the NWG added a third basic protocol beneath those two, for establishing a basic link between two hosts. This common piece was known as the Network Control Program (NCP). The network now had three conceptual layers of abstraction – the packet subnet controlled by the IMPs at the bottom, the host-to-host connection provided by NCP in the middle, and application protocols (FTP and Telnet) at the top.
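The layering can be pictured as each level wrapping the data handed down from the level above and unwrapping it on the way back up. The sketch below is purely illustrative: the one-byte link tag, the dictionary envelope, and every function name are hypothetical, and none of them reflect the actual NCP or IMP formats.

```python
def application_layer(command: str) -> bytes:
    """Top layer: an application protocol (Telnet or FTP) produces bytes to send."""
    return command.encode("ascii")


def host_to_host_layer(link_id: int, app_data: bytes) -> bytes:
    """Middle layer: tag the data with the host-to-host connection it belongs to.

    The one-byte tag is an assumption made for this example only.
    """
    return bytes([link_id]) + app_data


def subnet_layer(destination: str, message: bytes) -> dict:
    """Bottom layer: hand the message to the local IMP with a destination address."""
    return {"to": destination, "body": message}


def receive(envelope: dict) -> tuple:
    """Unwrap in reverse order at the destination host."""
    message = envelope["body"]             # the subnet delivers the message
    link_id, app_data = message[0], message[1:]  # host-to-host layer strips its tag
    return link_id, app_data.decode("ascii")     # application layer reads the text


# A remote-login command travels down the three layers, then back up.
envelope = subnet_layer("MIT", host_to_host_layer(5, application_layer("login alice")))
link_id, text = receive(envelope)
```

The point of the design is that each layer needs to understand only its own wrapper: the subnet never inspects the link tag, and the application never sees the destination address.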

The Failure?

It took until August of 1971 for NCP to be fully defined and implemented across the network, which by then comprised fifteen sites. Telnet implementations followed shortly thereafter, with the first stable definition of FTP arriving a year behind, in the summer of 1972. If we consider the state of ARPANET in this time period, some three years after it was first brought on-line, it would have to be considered a failure when measured against the resource-sharing dream envisioned by Licklider and carried into practical action by his protégé, Robert Taylor.

To begin with, it was hard to even find out what resources existed on the network which one could borrow. The Network Information Center used a model of voluntary contribution: each site was expected to provide up-to-date information about its own data and programs. Although it would have collectively benefited the community for everyone to do so, each individual site had little incentive to advertise its resources and make them accessible, much less provide up-to-date documentation or consultation. Thus the NIC largely failed to serve as an effective network directory. Probably its most important function in those early years was to provide electronic hosting for the growing corpus of RFCs.

Even if Alice at UCLA knew about a useful resource at MIT, however, an even more serious obstacle intervened. Telnet would get Alice to the log-in screen at MIT but no further. For Alice to actually access any program on the MIT host, she would have to make an off-line agreement with MIT to get an account on their computer, usually requiring her to fill out paperwork at both institutions and arrange for funding to pay MIT for the computer resources used. Finally, incompatibilities between hardware and system software at each site meant that there was often little value to file transfer, since you couldn’t execute programs from remote sites on your own computer.

Ironically, the most notable early successes in resource sharing were not in the domain of interactive time-sharing that ARPANET was built to support, but in large-scale, old-school, non-interactive data-processing. UCLA added their underutilized IBM 360/91 batch-processing machine to the network and provided consultation by telephone to support remote users, and thus managed to significantly supplement the income of the computer center. The ARPA-funded ILLIAC IV supercomputer at the University of Illinois and the Datacomputer at the Computer Corporation of America in Cambridge also found some remote clients on ARPANET.5

None of these applications, however, came close to fully utilizing the network. In the fall of 1971, with fifteen host computers online, the network in total carried about 45 million bits of traffic per site per day, an average of 520 bits-per-second on a network of AT&T leased lines with a capacity of 50,000 bits-per-second each.6 Moreover, much of this was test traffic generated by the Network Measurement Center at UCLA. The enthusiasm of a few early adopters aside (such as Steve Carr, who made daily use of the PDP-10 at the University of Utah from Palo Alto7), not much was happening on ARPANET.8
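The arithmetic behind that comparison is easy to check. The short calculation below uses only the figures quoted above; the comparison against a single line's capacity is a rough yardstick rather than a precise utilization measurement, and the variable names are ours.

```python
BITS_PER_SITE_PER_DAY = 45_000_000   # traffic figure quoted in the text
SECONDS_PER_DAY = 24 * 60 * 60       # 86,400 seconds
LINE_CAPACITY_BPS = 50_000           # capacity of one leased AT&T line

# Average rate per site, in bits per second.
average_bps = BITS_PER_SITE_PER_DAY / SECONDS_PER_DAY

# Rough yardstick: that rate as a share of what a single line could carry.
share_of_one_line = average_bps / LINE_CAPACITY_BPS

print(f"average rate: {average_bps:.1f} bits per second")
print(f"share of one line's capacity: {share_of_one_line:.1%}")
```

The average works out to just under 521 bits per second, which is about one percent of what a single 50,000 bits-per-second line could carry.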

But ARPANET was soon saved from any possible accusations of stagnation by yet a third application protocol, a little something called email.

Further Reading

Janet Abbate, Inventing the Internet (1999)

Katie Hafner and Matthew Lyon, Where Wizards Stay Up Late: The Origins of the Internet (1996)

1. Each IMP ended up costing ARPA $45,000, roughly $314,000 in 2019 dollars.

2. Severo Ornstein, Computing in the Middle Ages (2002); Judy O’Neill, “An Interview with William Crowther,” March 12, 1990; J.W. Craig, et al., “The Lincoln Experimental Terminal,” Lincoln Laboratory, March 21, 1967.

3. F.E. Heart, et al., “The Interface Message Processor for the ARPA Computer Network,” Spring Joint Computer Conference, 1970.

4. Crocker and Cerf were actually close friends.

5. Abbate, Inventing the Internet, 97-98.

6. Hafner and Lyon, Where Wizards Stay Up Late, 175.

7. S. Crocker, S. Carr, and V. Cerf, RFC 33, “New HOST-HOST Protocol.”

8. Perhaps the most interesting thing happening, from the present-day point-of-view, was the launching of the digital library Project Gutenberg in December 1971, by University of Illinois student Michael S. Hart.
