By the end of 1966, Robert Taylor had set in motion a project to interlink the many computers funded by ARPA, a project inspired by the “intergalactic network” vision of J.C.R. Licklider.

Taylor put the responsibility for executing that project into the capable hands of Larry Roberts. Over the following year, Roberts made several crucial decisions which would reverberate through the technical architecture and culture of ARPANET and its successors, in some cases for decades to come. The first of these in importance, though not in chronology, was to determine the mechanism by which messages would be routed from one computer to another.

The Problem

If computer A wants to send a message to computer B, how does the message find its way from the one to the other? In theory, one could allow any node in a communications network to communicate with any other node by linking every such pair with its own dedicated cable. To communicate with B, A would simply send a message over the outgoing cable that connects to B. Such a network is termed fully-connected. At any significant size, however, this approach quickly becomes impractical, since the number of connections necessary increases with the square of the number of nodes.1
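To put rough numbers on that quadratic growth, here is a minimal sketch. It assumes only that each of the n nodes needs one cable to each of the other n − 1 nodes, and that a cable between A and B is counted once rather than twice; the function name is made up for illustration.

```python
def links_needed(nodes: int) -> int:
    """Dedicated cables required to join every pair of nodes directly."""
    # Each node pairs with (nodes - 1) others; halve to count each cable once.
    return nodes * (nodes - 1) // 2


for n in (4, 10, 100, 1000):
    print(f"{n:>5} nodes need {links_needed(n):>7} links")
```

Going from ten nodes to a thousand multiplies the node count by a hundred, but the cable count by roughly ten thousand.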

Instead, some means is needed for routing a message, upon arrival at some intermediate node, on toward its final destination. As of the early 1960s, two basic approaches to this problem were known. The first was store-and-forward message switching. This was the approach used by the telegraph system. When a message arrived at an intermediate location, it was temporarily stored there (typically in the form of paper tape) until it could be re-transmitted out to its destination, or another switching center closer to that destination.

Then the telephone appeared, and a new approach was required. A multiple-minute delay for each utterance in a telephone call to be transcribed and routed to its destination would result in an experience rather like trying to converse with someone on Mars. Instead the telephone system used circuit switching. The caller began each telephone call by sending a special message indicating whom they were trying to reach. At first this was done by speaking to a human operator, later by dialing a number which was processed by automatic switching equipment. The operator or equipment established a dedicated electric circuit between caller and callee. In the case of a long-distance call, this might take several hops through intermediate switching centers. Once this circuit was completed, the actual telephone call could begin, and that circuit was held open until one party or the other terminated the call by hanging up.

The data links that would be used in ARPANET to connect time-shared computers partook of qualities of both the telegraph and the telephone. On the one hand, data messages came in discrete bursts, like the telegraph, unlike the continuous conversation of a telephone. But these messages could come in a variety of sizes for a variety of purposes, from console commands only a few characters long to large data files being transferred from one computer to another. If the latter suffered some delays in arriving at their destination, no one would particularly mind. But remote interactivity required very fast response times, rather like a telephone call.

One important difference between computer data networks and both the telephone and the telegraph was the error-sensitivity of machine-processed data. A single character in a telegram changed or lost in transmission, or a fragment of a word dropped in a telephone conversation, was unlikely to seriously impair human-to-human communication. But if noise on the line flipped a single bit from 0 to 1 in a command to a remote computer, that could entirely change the meaning of that command. Therefore every message would have to be checked for errors, and re-transmitted if any were found. Such repetition would be very costly for large messages, which would be all the more likely to be disrupted by errors, since they took longer to transmit.
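A back-of-the-envelope sketch shows why size matters so much here. It rests on two simplifying assumptions that are mine, not the historical record's: bit errors strike independently, and the error rate (one bit in 100,000) is a made-up figure chosen only for illustration.

```python
BIT_ERROR_RATE = 1e-5  # hypothetical: one bit in 100,000 is corrupted


def intact_probability(bits: int, bit_error_rate: float = BIT_ERROR_RATE) -> float:
    """Chance that every bit of a message arrives unchanged."""
    return (1.0 - bit_error_rate) ** bits


def expected_transmissions(bits: int, bit_error_rate: float = BIT_ERROR_RATE) -> float:
    """Average number of sends if the whole message is resent until it arrives clean."""
    return 1.0 / intact_probability(bits, bit_error_rate)


for label, bits in (("short command", 80), ("large file", 1_000_000)):
    print(f"{label}: {intact_probability(bits):.6f} chance intact, "
          f"about {expected_transmissions(bits):,.1f} sends expected")
```

Under these assumptions the short command almost always gets through on the first try, while the large file sent as a single unit would need to be repeated thousands of times on average.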

A solution to these problems was arrived at independently on two different occasions in the 1960s, but the later instance was the first to come to the attention of Larry Roberts and ARPA.

The Encounter

In the fall of 1967, Roberts arrived in Gatlinburg, Tennessee, hard by the forested peaks of the Great Smoky Mountains, to deliver a paper on ARPA’s networking plans. Although he was almost a year into his stint at the Information Processing Techniques Office (IPTO), many areas of the network design were still hazy, among them the solution to the routing problem. Other than a vague mention of blocks and block size, the only reference to it in Roberts’ paper is in a brief and rather noncommittal passage at the very end: “It has proven necessary to hold a line which is being used intermittently to obtain the one-tenth to one second response time required for interactive work. This is very wasteful of the line and unless faster dial up times become available, message switching and concentration will be very important to network participants.”2 Evidently, Roberts had still not entirely decided whether to abandon the approach he had used in 1965 with Tom Marrill, that is to say, connecting computers over the circuit-switched telephone network via an auto-dialer.

Coincidentally, however, someone else was attending the same symposium with a much better thought-out idea of how to solve the problem of routing in data networks. Roger Scantlebury had crossed the Atlantic, from the British National Physical Laboratory (NPL), to present his own paper. Scantlebury took Roberts aside after hearing his talk, and told him all about something called packet-switching. It was a technique his supervisor at the NPL, Donald Davies, had developed. Davies’ story and achievements are not generally well-known in the U.S., although in the fall of 1967, Davies’ group at the NPL was at least a year ahead of ARPA in its thinking.

Davies, like many early pioneers of electronic computing, had trained as a physicist. He graduated from Imperial College, London in 1943, when he was only 19 years old, and was immediately drafted into the “Tube Alloys” program – Britain’s code name for its nuclear weapons project. There he was responsible for supervising a group of human computers, using mechanical and electric calculators to crank out numerical solutions to problems in nuclear fission.3 After the war, he learned from the mathematician John Womersley about a project he was supervising out at the NPL, to build an electronic computer that would perform the same kinds of calculations at vastly greater speed. The computer, designed by Alan Turing, was called ACE, for “automatic computing engine.”

Davies was sold, and got himself hired at NPL as quickly as he could. After contributing to the detailed design and construction of the ACE machine, he remained heavily involved in computing as a research leader at NPL. He happened in 1965 to be in the United States for a professional meeting in that capacity, and used the occasion to visit several major time-sharing sites to see what all the buzz was about. In the British computing community, time-sharing in the American sense of sharing a computer interactively among multiple users was unknown. Instead, time-sharing meant splitting a computer’s workload across multiple batch-processing programs (to allow, for example, one program to proceed while another was blocked reading from a tape).4 Davies’ travels took him to Project MAC at MIT, RAND Corporation’s JOSS Project in California, and the Dartmouth Time-Sharing System in New Hampshire. On the way home, one of his colleagues suggested they hold a seminar on time-sharing to inform the British computing community about the new techniques that they had learned about in the U.S. Davies agreed, and played host to a number of major figures in American computing, among them Fernando Corbató (creator of the Compatible Time-Sharing System at MIT), and Larry Roberts himself.

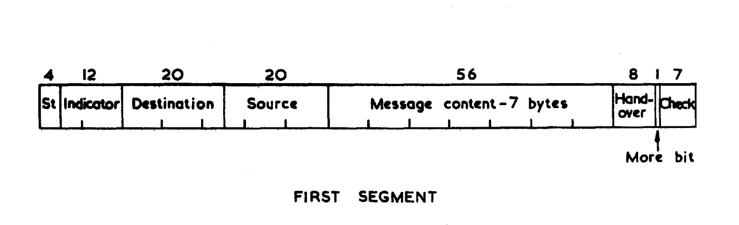

During the seminar (or perhaps immediately after), Davies was struck with the notion that the time-sharing philosophy could be applied to the links between computers, as well as to the computers themselves. Time-sharing computers gave each user a small time slice of the processor before switching to the next, giving each user the illusion of an interactive computer at their fingertips. Likewise, by slicing up each message into standard-sized pieces which Davies called “packets,” a single communications channel could be shared by multiple computers or multiple users of a single computer. And moreover, this would address all the aspects of data communication that were poorly served by telephone- or telegraph-style switching. A user engaged interactively at a terminal, sending short commands and receiving short responses, would not have their single-packet messages blocked behind a large file transfer, since that transfer would be broken into many packets. And any corruption in such large messages would only affect a single packet, which could easily be re-transmitted to complete the message.
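The re-transmission argument can be made concrete with a small sketch. Nothing here reproduces Davies’ actual design: the 128-byte payload, the `Packet` fields, and the use of CRC-32 (via Python’s standard `zlib.crc32`) as the error check are all assumptions chosen for illustration.

```python
import zlib
from dataclasses import dataclass

PACKET_SIZE = 128  # payload bytes per packet; an arbitrary illustrative value


@dataclass
class Packet:
    seq: int       # position of this piece within the original message
    payload: bytes
    checksum: int  # CRC-32 of the payload, standing in for any error check


def packetize(message: bytes, size: int = PACKET_SIZE) -> list[Packet]:
    """Slice a message into fixed-size, individually checkable packets."""
    chunks = [message[i:i + size] for i in range(0, len(message), size)]
    return [Packet(seq, chunk, zlib.crc32(chunk)) for seq, chunk in enumerate(chunks)]


def damaged(packets: list[Packet]) -> list[int]:
    """Sequence numbers of packets whose payload no longer matches its checksum."""
    return [p.seq for p in packets if zlib.crc32(p.payload) != p.checksum]


def reassemble(packets: list[Packet]) -> bytes:
    """Put the payloads back together in sequence order."""
    return b"".join(p.payload for p in sorted(packets, key=lambda p: p.seq))


message = bytes(range(256)) * 4  # a 1024-byte stand-in for a "large" transfer
sent = packetize(message)
received = [Packet(p.seq, p.payload, p.checksum) for p in sent]

# Simulate line noise flipping a single bit in one packet.
noisy = bytearray(received[3].payload)
noisy[0] ^= 0x01
received[3].payload = bytes(noisy)

bad = damaged(received)
for seq in bad:
    received[seq] = sent[seq]  # only the damaged packet is sent again

print(f"{len(sent)} packets sent, re-sent {bad}, intact: {reassemble(received) == message}")
```

One flipped bit costs a single 128-byte re-send rather than a repeat of the whole message, and because every piece is small, a one-packet console command queued behind this transfer waits for at most one packet’s worth of line time.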

Davies wrote up his ideas in an unpublished 1966 paper, entitled “Proposal for a Digital Communication Network.” The most advanced telephone networks were then on the verge of computerizing their switching systems, and Davies proposed building packet-switching into that next-generation telephone network, thereby creating a single wide-band communications network that could serve a wide variety of uses, from ordinary telephone calls to remote computer access. By this time Davies had been promoted to Superintendent of NPL, and he formed a data communications group under Scantlebury to flesh out his design and build a working demonstration.

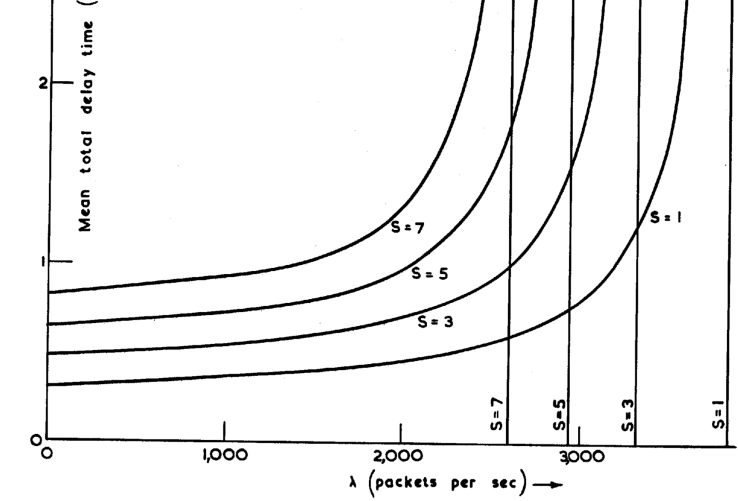

Over the year leading up to the Gatlinburg conference, Scantlebury’s team had thus worked out details of how to build a packet-switching network. The failure of a switching node could be dealt with by adaptive routing with multiple paths to the destination, and the failure of an individual packet by re-transmission. Simulation and analysis indicated an optimal packet size of around 1000 bytes – much smaller and the loss of bandwidth from the header metadata required on each packet became too costly, much larger and the response times for interactive users would be impaired too often by large messages.
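The shape of that trade-off is easy to see with a deliberately crude model. The 16-byte header and the 50 kilobit-per-second line below are assumptions made for illustration, not figures from the NPL analysis, and the model ignores queueing, errors, and everything else that the real simulations would have considered.

```python
HEADER_BYTES = 16              # hypothetical per-packet header size
LINE_BITS_PER_SECOND = 50_000  # assumed line speed


def header_overhead(payload_bytes: int, header_bytes: int = HEADER_BYTES) -> float:
    """Fraction of each packet's bits spent on header rather than data."""
    return header_bytes / (payload_bytes + header_bytes)


def blocking_delay_ms(payload_bytes: int,
                      header_bytes: int = HEADER_BYTES,
                      line_bits_per_second: int = LINE_BITS_PER_SECOND) -> float:
    """Time one packet occupies the line, which is how long a packet behind it waits."""
    total_bits = (payload_bytes + header_bytes) * 8
    return total_bits / line_bits_per_second * 1000


for payload in (10, 100, 1_000, 10_000, 100_000):
    print(f"{payload:>7}-byte payload: {header_overhead(payload):6.1%} overhead, "
          f"{blocking_delay_ms(payload):9.1f} ms on the line")
```

Very small packets spend most of the line on headers, while very large ones hold the line long enough that an interactive user stuck behind them would notice the pause.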

Meanwhile, Davies’ and Scantlebury’s literature search turned up a series of detailed research papers by an American who had come up with roughly the same idea, several years earlier. Paul Baran, an electrical engineer at RAND Corporation, had not been thinking at all about the needs of time-sharing computer users, however. RAND was a Defense Department-sponsored think tank in Santa Monica, California, created in the aftermath of World War II to carry out long-range planning and analysis of strategic problems in advance of direct military needs.[^sdc] Baran’s goal was to ward off nuclear war by building a highly robust military communications net, which could survive even a major nuclear attack. Such a network would make a Soviet preemptive strike less attractive, since it would be very hard to knock out America’s ability to respond by hitting a few key nerve centers. To that end, Baran proposed a system that would break messages into what he called message blocks, which could be independently routed across a highly-redundant mesh of communications nodes, only to be reassembled at their final destination.

[^sdc]: System Development Corporation (SDC), the primary software contractor to the SAGE system and the site of one of the first networking experiments, as discussed in the last segment, had been spun off from RAND.

ARPA had access to Baran’s voluminous RAND reports, but disconnected as they were from the context of interactive computing, their relevance to ARPANET was not obvious. Roberts and Taylor seem never to have taken notice of them. Instead, in one chance encounter, Scantlebury had provided everything to Roberts on a platter: a well-considered switching mechanism, its applicability to the problem of interactive computer networks, the RAND reference material, and even the name “packet.” The NPL’s work also convinced Roberts that higher speeds would be needed than he had contemplated to get good throughput, and so he upgraded his plans to 50 kilobits-per-second lines. For ARPANET, the fundamentals of the routing problem had been solved.5

The Networks That Weren’t

As we have seen, not one, but two parties beat ARPA to the punch on figuring out packet-switching, a technique that has proved so effective that it’s now the basis of effectively all communications. Why, then, was ARPANET the first significant network to actually make use of it?

The answer is fundamentally institutional. ARPA had no official mandate to build a communications network, but they did have a large number of pre-existing research sites with computers, a “loose” culture with relatively little oversight of small departments like the IPTO, and piles and piles of money. Taylor’s initial 1966 request for ARPANET came to $1 million, and Roberts continued to spend that much or more in every year from 1969 onward to build and operate the network.6 Yet for ARPA as a whole this amount of money was pocket change, and so none of his superiors worried too much about what Roberts was doing with it, so long as it could be vaguely justified as related to national defense.

By contrast, Baran at RAND had no means or authority to actually do anything. His work was pure research and analysis, which might be applied by the military services, if they desired to do so. In 1965, RAND did recommend his system to the Air Force, which agreed that Baran’s design was viable. But the implementation fell within the purview of the Defense Communications Agency, who had no real understanding of digital communications. Baran convinced his superiors at RAND that it would be better to withdraw the proposal than allow a botched implementation to sully the reputation of distributed digital communication.

Davies, as Superintendent of the NPL, had rather more executive authority than Baran, but a more limited budget than ARPA, and no pre-existing social and technical network of research computer sites. He was able to build a prototype local packet-switching “network” (it had only one node, but many terminals) at NPL in the late 1960s, with a modest budget of £120,000 over three years.7 ARPANET spent roughly half that on annual operational and maintenance costs alone at each of its many network sites, excluding the initial investment in hardware and software.8 The organization that would have had the power to build a large-scale British packet-switching network was the post office, which operated the country’s telecommunications networks in addition to its traditional postal system. Davies managed to interest a few influential post office officials in his ideas for a unified, national digital network, but to change the momentum of such a large system was beyond his power.

Licklider, through a combination of luck and planning, had found the perfect hothouse for his intergalactic network to blossom in. That is not to say that everything except for the packet-switching concept was a mere matter of money. Execution matters, too. Moreover, several other important design decisions defined the character of ARPANET. The next we will consider is how responsibilities would be divided between the host computers sending and receiving a message, versus the network over which they sent it.

Further Reading

Janet Abbate, Inventing the Internet (1999)

Katie Hafner and Matthew Lyon, Where Wizards Stay Up Late (1996)

Leonard Kleinrock, “An Early History of the Internet,” IEEE Communications Magazine (August 2010)

Arthur Norberg and Julie O’Neill, Transforming Computer Technology: Information Processing for the Pentagon, 1962-1986 (1996)

M. Mitchell Waldrop, The Dream Machine: J.C.R. Licklider and the Revolution That Made Computing Personal (2001)

1. Precisely speaking, (n² − n) / 2.

2. Lawrence G. Roberts, “Multiple Computer Networks and Intercomputer Communication,” Proceedings of the First ACM Symposium on Operating System Principles, 1967.

3. He was supervised by German expatriate Klaus Fuchs, who was by that time already passing nuclear secrets on to the Soviets.

4. The British meaning of time-sharing would later be called multi-programming.

5. The above description of how packet-switching came to be is the most widely-accepted one. However, there is an alternative version. Roberts claimed in later years that by the time of the Gatlinburg symposium, he already had the basic concepts of packet-switching well in mind, and that they originated with his old colleague Len Kleinrock, who had written about them as early as 1962, as part of his Ph.D. research on communication nets. It requires a great deal of squinting to extract anything resembling packet-switching from Kleinrock’s work, however, and no other contemporary textual evidence that I have come across backs the Kleinrock/Roberts account.

6. Baran, et al., “ARPANET Management Study,” Cabledata Associates (1974).

7. Martin Campbell-Kelly, “Oral history interview with Donald W. Davies,” 1986.

8. Baran, et al., “ARPANET Management Study,” Cabledata Associates (1974).
