As we saw in the last installment, the search by radio and telephone engineers for more powerful amplifiers opened a new technological vista that quickly acquired the name electronics. An electronic amplifier could easily be converted into a digital switch, but one with vastly greater speed than its electro-mechanical cousin, the telephone relay. Due to its lack of mechanical parts, a vacuum tube could switch on or off in a microsecond or less, rather than the ten milliseconds or more required by a relay.

Between 1939 and 1945, three computers were built on the basis of these new electronic components. It is no coincidence that the dates of construction of these machines lie neatly within the period of the Second World War. This conflict – unparalleled in history in the degree to which it yoked entire peoples, body and mind, to the chariot of war – permanently transformed the relationship between states on the one hand, and science and technology on the other, and brought forth a vast array of new devices.

The story of each of these first three electronic computers is entangled with that of the war. One, dedicated to the decryption of German communications, remained shrouded in secrecy until the 1970s, when it was far too late to be of any but historical interest. Another, a machine most readers will have heard of, was ENIAC, a military calculator finished too late to aid the war effort. But here we will consider the earliest of the three machines, the brainchild of one John Vincent Atanasoff.

Atanasoff

In 1930, John Atanasoff, the American-born son of an immigrant from Ottoman Bulgaria, finally achieved his youthful dream of becoming a theoretical physicist. As with most such dreams, however, the reality was not all that might be hoped for. In particular, like most students in engineering and the physical sciences in the first half of the twentieth century, Atanasoff had to endure the grinding burden of constant calculation. His dissertation at the University of Wisconsin, on the polarization of helium, required eight tedious weeks of computation to complete, with the aid of a mechanical desktop calculator.

By 1935, now well settled in as a professor at Iowa State University, Atanasoff decided to do something about that burden. He began to consider all the possible ways he could build a new, more powerful kind of computing machine. After rejecting analog methods (like the MIT differential analyzer) as too limited and imprecise, he eventually decided he would build a digital machine, one that represented numbers as discrete values rather than continuous measurements. He was familiar with base-two arithmetic from his youth, and saw that it mapped much more neatly onto the on-off structure of a digital switch than the familiar decimal numbers. So he also decided to make a binary machine. Finally, he decided that in order to be as fast and flexible as possible, his machine would need to be electronic, using vacuum tubes to perform calculations.

Atanasoff also needed to decide on a problem space – what exactly would his computer be designed to do? He eventually decided that it would solve systems of linear equations, by reducing them steadily down to equations of a single variable (using an algorithm called Gaussian elimination) – the same kind of calculation that dominated his dissertation work. It would support up to thirty equations each with up to thirty variables. Such a computer could solve problems of importance to scientists and engineers, while not, it seemed, being impossibly complex to design.
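For readers who have not met the algorithm, here is a minimal modern sketch of Gaussian elimination in Python. It is an illustration of the general technique only, not a reconstruction of the ABC's own procedure; the function name `solve_linear_system` and the sample equations are invented for this example, and exact fractions are used to sidestep rounding.

```python
from fractions import Fraction


def solve_linear_system(augmented):
    """Solve a square linear system given as rows [a1, ..., an, b].

    Illustrative sketch only: a textbook Gaussian elimination,
    not the procedure the ABC actually followed.
    """
    n = len(augmented)
    rows = [[Fraction(x) for x in row] for row in augmented]

    # Forward elimination: remove one variable at a time from the rows below.
    for col in range(n):
        pivot = next((r for r in range(col, n) if rows[r][col] != 0), None)
        if pivot is None:
            raise ValueError("system has no unique solution")
        rows[col], rows[pivot] = rows[pivot], rows[col]
        for r in range(col + 1, n):
            factor = rows[r][col] / rows[col][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[col])]

    # Back substitution: the last row now has a single variable; work upward.
    solution = [Fraction(0)] * n
    for i in reversed(range(n)):
        known = sum(rows[i][j] * solution[j] for j in range(i + 1, n))
        solution[i] = (rows[i][n] - known) / rows[i][i]
    return solution


# Hypothetical example: three equations in three unknowns.
system = [
    [2, 1, -1, 8],
    [-3, -1, 2, -11],
    [-2, 1, 2, -3],
]
result = solve_linear_system(system)
print(result)
```

Each pass of the outer loop leaves one fewer variable in the remaining equations, which is the "reducing them steadily down" described above.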

The State of the Art

By the mid-1930s, electronic technology had diversified tremendously from its origins some twenty-five years earlier, and two developments were especially relevant to Atanasoff’s project: the trigger relay, and the electronic counter.

Since the nineteenth century, telegraph and telephone engineers had had access to a handy little contrivance called the latch. A latch is a bistable relay that uses permanent magnets to hold it in whatever state you left it in – open or closed – until it receives an electric signal to switch states. But vacuum tubes could not do this. They had no mechanical component, and were only ‘closed’ or ‘open’ insofar as electricity was currently flowing to the grid or not. In 1918, however, two British physicists, William Eccles and Frank Jordan, wired together two tubes in such a way as to create what they called a “trigger relay” – an electronic relay that would stay on indefinitely once triggered by an initial impulse. Eccles and Jordan created their new circuit for telecommunications purposes, on behalf of the British Admiralty at the tail end of the Great War. But the Eccles-Jordan circuit (later known as a flip-flop) could also be seen as storing a binary digit – a 1 when transmitting a signal, otherwise a 0. Thus n flip-flops could represent an n-digit binary number.

About a decade after the flip-flop came the second major advance in electronics that impinged on the world of computing: electronic counters. Once again, as so often in the early history of computing, tedium was the mother of invention. Physicists studying the radiation of sub-atomic particles found themselves having to either listen to clicks or stare at photographic records for hours on end in order to measure the particle emission rate of various substances by counting detection events. Mechanical or electro-mechanical counters held out the tantalizing possibility of relief, but moved too slowly: they could not possibly register multiple events that occurred, say, a millisecond apart.

The pivotal figure in solving this problem was Eryl Wynn-Williams, who worked under Ernest Rutherford at the Cavendish Laboratory in Cambridge. Wynn-Williams was handy with electronics, and had already used tubes (valves, in British parlance) to build amplifiers for making particle events audible. In the early 1930s, he realized that valves could also be used to create what he called a “scale-of-two” counter; that is to say, a binary counter. It was, in essence, a series of flip-flops, with the added ability to ripple carries upward through the chain.1
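The logic of such a counter can be sketched in a few lines of Python. This models only the behavior (a chain of two-state stages with carries rippling upward), not the electronics; the class names `FlipFlop` and `ScaleOfTwoCounter` are invented for illustration.

```python
class FlipFlop:
    """A single two-state stage holding one binary digit."""

    def __init__(self):
        self.state = 0

    def toggle(self):
        """Flip the stored bit; return True if it fell from 1 to 0 (a carry)."""
        self.state ^= 1
        return self.state == 0


class ScaleOfTwoCounter:
    """A chain of stages; index 0 is the least significant digit."""

    def __init__(self, stages):
        self.stages = [FlipFlop() for _ in range(stages)]

    def pulse(self):
        """Register one event, rippling carries up the chain as needed."""
        for stage in self.stages:
            if not stage.toggle():
                break  # no carry out of this stage, so the ripple stops here

    def value(self):
        return sum(stage.state << i for i, stage in enumerate(self.stages))


counter = ScaleOfTwoCounter(stages=4)
for _ in range(11):
    counter.pulse()

# Most significant digit first, as one would write the number on paper.
bits = [stage.state for stage in reversed(counter.stages)]
print(bits, counter.value())
```

Every incoming pulse flips the first stage; a stage passes a pulse on to its neighbor only when it falls back to 0, which is exactly how a carry works in binary addition.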

Wynn-Williams’ counter quickly became part of the essential laboratory apparatus for anyone involved in nuclear physics. Physicists built very small counters, with perhaps three binary digits (i.e., able to count up to seven). That was sufficient to buffer a slower mechanical counter, capturing the closely spaced events that the slower-moving mechanical parts alone would miss.2 But in theory such counters could be extended to capture numbers of arbitrary size or precision. They were, strictly speaking, the first digital, electronic computing machines.
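The buffering arrangement amounts to simple arithmetic, sketched below in Python. The function names and the event count are hypothetical; the only assumption taken from the text is a three-digit electronic stage feeding a slower mechanical register.

```python
def count_with_prescaler(events, stages=3):
    """Count events with a small fast counter in front of a slow one.

    The fast (electronic) counter wraps after 2**stages events; each wrap
    sends a single pulse to the slow (mechanical) counter.
    """
    modulus = 2 ** stages
    fast = 0  # reading of the electronic stage
    slow = 0  # reading of the mechanical register
    for _ in range(events):
        fast += 1
        if fast == modulus:
            fast = 0
            slow += 1  # the mechanical counter moves once per 2**stages events
    return slow, fast


def total_events(slow, fast, stages=3):
    """Recover the true count from the two readings."""
    return slow * 2 ** stages + fast


slow, fast = count_with_prescaler(1003)
print(slow, fast, total_events(slow, fast))
```

With three binary digits the mechanical register has to respond only once for every eight events, so it can be eight times slower than the event rate without losing count.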

The Atanasoff-Berry Computer

Atanasoff was familiar with all this history, and it helped to convince him of the feasibility of an electronic computer. But he would not end up directly using either scale-of-two counters or flip-flops in his design. He at first tried using slightly modified counters as the basis for the arithmetic in his machine – for what is addition but repeated counting? But for reasons that are somewhat obscure he could not make the counting circuits reliable, and had to devise his own add-subtract circuitry. The use of flip-flops for short-term storage of binary numbers was out of the question for him due to his limited budget and his ambition to handle thirty coefficients at a time. This had serious consequences, as we shall see momentarily.

By 1939, Atanasoff had completed the design of his computer. He now needed someone with suitable expertise to help him build it. He found such a person in a graduate student in Iowa State’s electrical engineering department named Clifford Berry. By the end of the year, Atanasoff and Berry had a small-scale prototype working. The following year they completed the full thirty-coefficient computer. In the 1960s, a writer who unearthed their story dubbed this machine the Atanasoff-Berry Computer (ABC), and that name has stuck. Not all the kinks had been worked out, however. In particular, the ABC had a fault in roughly one binary digit per 10,000, which would be fatal to any very large computation.
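A rough calculation shows why such an error rate rules out large problems. The sketch below assumes, purely for illustration, that each binary digit fails independently with probability 1 in 10,000; the digit counts are hypothetical and are not figures for any actual ABC computation.

```python
def probability_error_free(bits, error_rate=1e-4):
    """Chance that every one of `bits` digits is handled correctly.

    Assumes independent errors, which is a simplification made for
    this illustration rather than a measured property of the ABC.
    """
    return (1 - error_rate) ** bits


# Hypothetical workloads of increasing size.
for bits in (1_000, 10_000, 100_000):
    print(bits, round(probability_error_free(bits), 4))
```

Under these assumptions, a job touching a thousand digits usually succeeds, one touching ten thousand fails more often than not, and one touching a hundred thousand almost never finishes cleanly.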

Nonetheless, here in Atanasoff and his ABC, one might locate the root and source of all modern computing. Did he not create (with Berry as his able assistant) the first binary, electronic, digital machine? Are these not the essential characteristics of the millions, nay, billions, of devices that now shape and dominate economies, societies, and cultures across the world? So the argument runs.3

But let us step back a moment. The adjectives digital and binary are not special to the ABC. For example, the Bell Complex Number Computer (CNC), developed at around the same time, was a binary, digital, electromechanical computer that could do arithmetic in the complex plane. The ABC and CNC were also similar (and dissimilar from any modern computer) insofar as both solved problems only within a limited domain, and could not take an arbitrary sequence of instructions.

This leaves electronic. But, although its mathematical innards were indeed electronic, the ABC ran at electro-mechanical speeds. Because it was not financially feasible for Atanasoff and Berry to use vacuum tubes to store thousands of binary digits, they instead used electro-mechanical components to do so. The few hundred triodes which performed the core mathematical operations of the ABC were surrounded by spinning drums and whirring punch-card machines to store intermediate values between each computation step.

Atanasoff and Berry did heroic work to read and write punched cards at tremendous speed by scorching them electrically rather than actually punching them. But this caused problems of its own: it was the punch-card apparatus that was responsible for the 1 in 10,000 error rate. Moreover, even with their best efforts, the machine could “punch” no faster than one line per second, and thus the ABC could perform only one full computation cycle per second in each of its thirty arithmetic units. For the rest of that second, the vacuum tubes sat idly tapping their fingers while the machinery churned with agonizing slowness around them. Atanasoff and Berry had harnessed a proud thoroughbred to a hay wagon.4

Work on the ABC halted by the middle of 1942, when Atanasoff and Berry enlisted into the rapidly growing American war machine, which required minds as well as bodies. Atanasoff was called to the Naval Ordnance Laboratory in Washington to lead a team developing acoustic mines. Berry married Atanasoff’s secretary and found a position at a defense contractor in California, ensuring that he would not be drafted. Atanasoff prodded Iowa State for a time to patent his creation, but to no avail. He moved on to other things after the war, and never worked seriously on computers again. The computer itself was junked in 1948 to make room for the office of a new graduate student.

Perhaps Atanasoff simply began his work too early. Relying on modest grants from the university, he was able to spend only a few thousand dollars on the ABC’s construction, and therefore frugality trumped all other concerns in his design. Had he waited until the early 1940s, he might have secured a government grant for a fully electronic device. As it was – limited in application, difficult to operate, not very reliable, and not all that fast – the ABC was not a promising advertisement for the usefulness of electronic computing. The American war machine, despite its hunger for computational labor, left the ABC to rust in Ames, Iowa.5

Computing Engines at War

The First World War primed the pump for a massive investment in science and technology at the outset of the Second. A few short years had seen the practice of war on land and sea transformed by poison gas, tanks, magnetic mines, aerial reconnaissance and bombardment, and more. No political or military leader could fail to notice such a rapid transformation. It had been rapid enough that a research investment seeded at the onset of hostilities could give rise to new instruments of war in time to turn the tide in one’s favor.

The United States, rich in material and minds (many of them refugees from Hitler’s Europe), and standing aloof from the immediate struggle for survival and dominance faced by other nations, was able to take this lesson especially to heart. This manifested most obviously in the marshaling of tremendous industrial and intellectual resources to create the first atomic weapons. Less well-known but no less expensive or important was a massive investment in radar technology, centered especially at the MIT “Rad Lab.”

Likewise, the nascent field of automatic computing received its own windfall of wartime funding, although on a much smaller scale. We have already had occasion to notice the variety of electro-mechanical computing projects spurred by the war effort. Relay computers were a known quantity, relatively speaking, since telephone switching circuits with thousands of relays had been operating for years. Electronic components, on the other hand, had not yet been proven to work at that scale. Most experts believed that an electronic computer would be fatally unreliable (the ABC being a case in point) or take too long to build to be useful to the war. Despite the sudden availability of government money, therefore, wartime electronic computing projects were few and far between. Just three were initiated, only two of which resulted in a working machine.

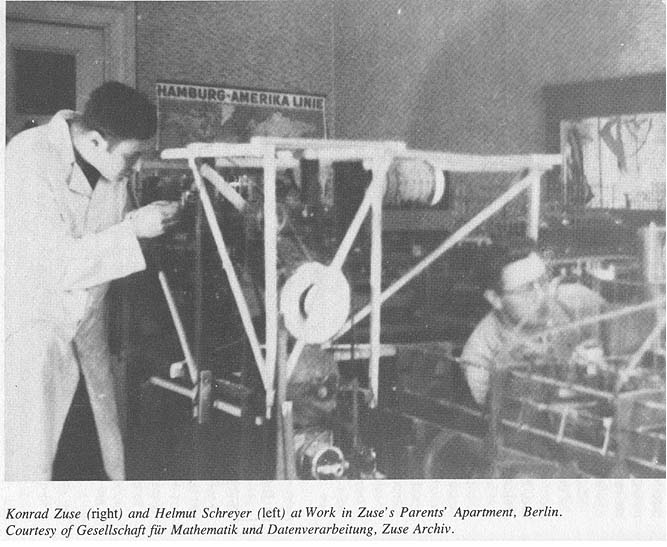

In Germany, telecommunications engineer Helmut Schreyer impressed upon his friend Konrad Zuse the value of an electronic machine, as opposed to the electromechanical “V3” that Zuse was building for the aircraft industry (later known as the Z3). Zuse eventually agreed to take on this secondary project with Schreyer, and the Research Institute for Aviation offered the funding for a 100-tube prototype in late 1941. But, preempted by higher-priority war work, and later slowed by frequent air raid damage, the men never got the machine to work reliably.6

Meanwhile, the first electronic computer to do useful work was built at a secret facility in Britain, where a telecommunications engineer proposed a radical new approach to cryptanalysis based on valves. We will pick up with that story next time.

Further Reading

Alice R. Burks and Arthur W. Burks, The First Electronic Computer: The Atanasoff Story (1988)

David Ritchie, The Computer Pioneers (1986)

Jane Smiley, The Man Who Invented the Computer (2010)

1. In practice, Wynn-Williams did not use flip-flops but thyratrons, a recently invented type of tube that contained gas rather than vacuum, and would stay on once the gas ‘ignited’ (reached an ionized state). C. E. Wynn-Williams, “The Use of Thyratrons for High Speed Automatic Counting of Physical Phenomena”, Proceedings of the Royal Society A, July 2, 1931; C. E. Wynn-Williams, “A Thyratron ‘Scale of Two’ Automatic Counter”, Proceedings of the Royal Society A, May 2, 1932.

2. Gareth Wynn-Williams, “Eryl Wynn-Williams and the Scale-of-Two Counter”, https://www.ifa.hawaii.edu/users/wynnwill/pdf/CavMag_WW.pdf

3. E.g., Mollenhoff, Atanasoff: Forgotten Father of the Computer; Smiley, The Man Who Invented the Computer; Burks and Burks, The First Electronic Computer. Interestingly, Alice and Arthur Burks actually both worked at the Moore School of Engineering in the 1940s, Alice as a (human) computer and Arthur as a mathematician who helped design ENIAC. The Burkses became alienated from Eckert and Mauchly, and allied themselves with Atanasoff, during the protracted and painful legal battle over ENIAC and EDVAC, which, unlike Jarndyce and Jarndyce, dragged on for merely a single generation.

4. John Gustafson, who led a project to reconstruct the ABC in the 1990s, estimated its best-case amortized computation speed, including operator time to set up the problem, as five additions or subtractions per second. This is certainly better than a human with a calculator could do, but not the kind of speed that electronic computing generally brings to mind. John Gustafson, “Reconstruction of the Atanasoff-Berry Computer,” in Raúl Rojas and Ulf Hashagen, eds., The First Computers: History and Architectures (2000).

5. Evidence that this was intentional rather than accidental comes in the person of Warren Weaver (who we’ve met before as the funder of the second generation differential analyzer at MIT). Weaver had taught Atanasoff mathematical physics during his doctoral studies at the University of Wisconsin, and by 1940 was part of the National Defense Research Committee (NDRC), later subsumed by the Office of Scientific Research and Development (OSRD). These organizations formed the central nervous system of the wartime research effort in the United States. Atanasoff began his first wartime project, on laying anti-aircraft fire, at Weaver’s behest, and Weaver came out to Ames to check up on him. Atanasoff showed him the ABC, and Weaver came away unimpressed. Smiley, 77-78; Bonnie Kaplan interview with John Atanasoff, August 16, 1972.

6. Helmut Schreyer, “An Experimental Model of an Electronic Computer,” Annals of the History of Computing, July-Sept. 1990.
